San Francisco, United States, January 2026 (Eurotoday Newspaper). As artificial intelligence becomes a foundational layer of the global economy, infrastructure decisions are now as consequential as software breakthroughs. In San Francisco this week, OpenAI detailed a long-term plan to rein in the soaring power demands of advanced computing, placing its energy strategy at the heart of its future operations. The announcement signals a broader shift in how leading technology firms balance rapid innovation with economic discipline and environmental responsibility in an era of unprecedented AI expansion.

The Growing Energy Challenge Behind Artificial Intelligence

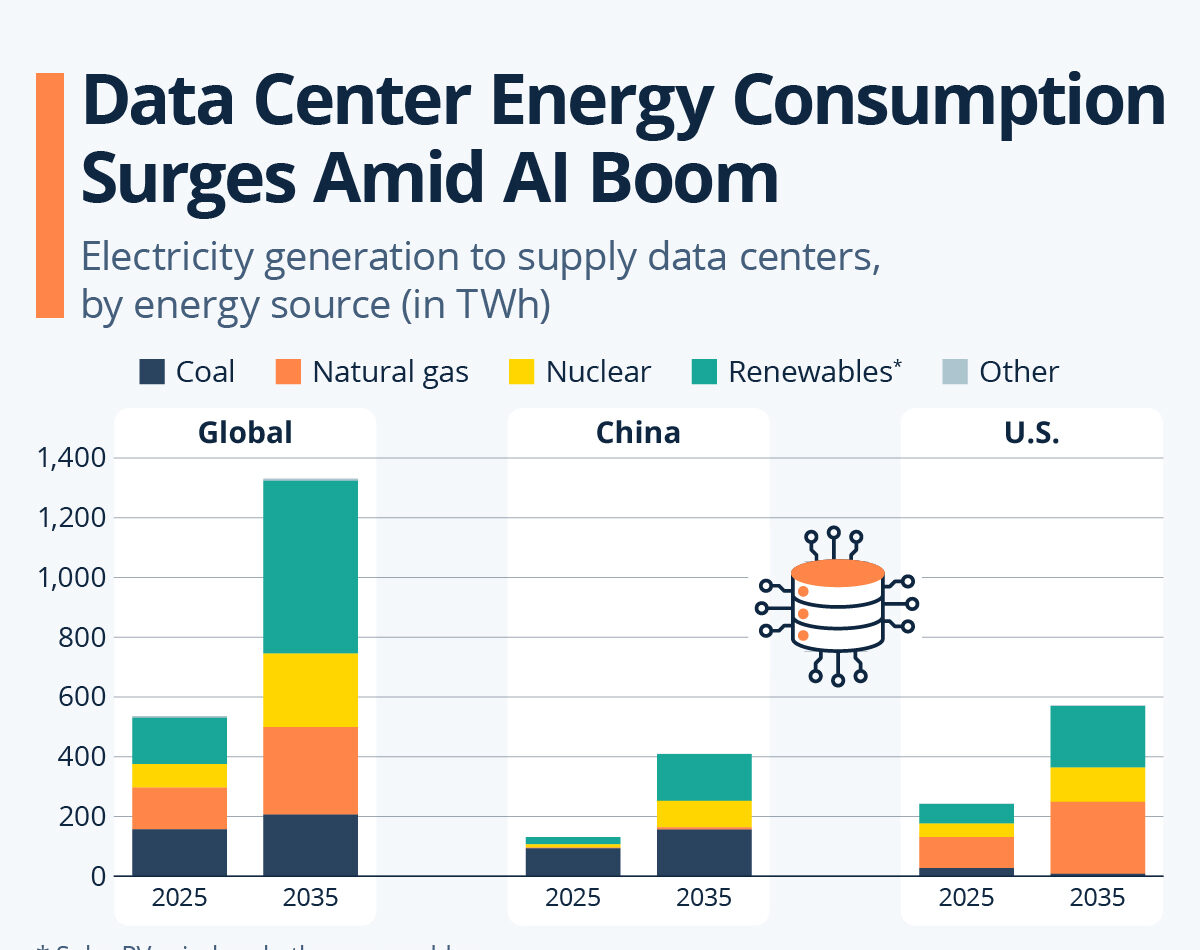

Artificial intelligence systems have evolved far beyond experimental deployments. Training and operating large-scale models requires immense computational throughput, translating directly into rising electricity consumption. Across the technology sector, energy availability and cost have emerged as binding constraints on growth.

OpenAI's leadership acknowledged that unchecked expansion would strain grids, inflate operating budgets, and attract regulatory scrutiny. The OpenAI energy strategy is designed to confront these risks directly, so that infrastructure growth remains sustainable as AI adoption accelerates worldwide.

Why Infrastructure Now Shapes Competitive Advantage

In earlier phases of AI development, innovation was defined primarily by model architecture and data scale. Today, infrastructure efficiency increasingly determines who can scale reliably and affordably. Energy management has become a differentiator rather than a background consideration.

By elevating efficiency to a strategic priority, the OpenAI energy strategy reframes infrastructure as a source of competitive strength, enabling the company to deploy powerful systems without proportionally increasing costs or environmental impact.

Hardware Decisions That Influence Power Demand

One of the central pillars of the plan involves hardware selection. Specialized AI accelerators can perform targeted workloads far more efficiently than general-purpose processors, delivering higher output per watt.

OpenAI executives explained that matching workloads to optimized hardware reduces wasted computation, especially during training cycles, which traditionally consume enormous amounts of power.

Software Optimization as a Hidden Energy Lever

Hardware alone cannot solve the power challenge. OpenAI is investing in software techniques that reduce unnecessary calculations while preserving model quality. Adaptive precision, streamlined training pipelines, and intelligent workload distribution are among the tools being deployed.

These measures embed efficiency directly into the development lifecycle, so that the OpenAI energy strategy works as a continuous practice rather than a one-time infrastructure upgrade.

Cooling Systems Undergoing a Redesign

Cooling accounts for a significant share of
