A Response to Coverage of AI Dependency and Ethical Clarity

The Story That Needs Retelling

In May 2026, the French outlet Mediapart recounted a woman’s emotional attachment to ChatGPT, describing it in terms of a “psychological hold” and claims of friendship. The situation is not unique, and it points to broader systemic failures rather than to rogue AI behavior.

Coverage of this kind tends to anthropomorphize algorithms, implying intentional manipulation. That framing is misleading, and it shifts accountability away from the human stakeholders responsible for these systems.

Understanding ChatGPT

ChatGPT is a large language model. It produces responses by predicting likely next words from statistical patterns in its training data, not through consciousness. It has no intent and no emotion; phrases like “I am here for you” are calculated predictions, not expressions of care.

This distinction is crucial for ethical AI interactions. Mischaracterizing the model as an independent actor misplaces accountability and overlooks necessary human oversight.
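To illustrate how a phrase like “I am here for you” can emerge from statistics alone, here is a minimal sketch of next-word prediction using simple word-pair counts. It is a toy model and not how ChatGPT is actually implemented: the four-sentence corpus and the function names (`train_bigrams`, `most_likely_next`, `complete`) are assumptions made up for this example, and real language models use neural networks trained on vastly more text.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus (an assumption for illustration, not real training data).
CORPUS = [
    "i am here for you",
    "i am here for you always",
    "i am here to help",
    "i am glad you asked",
]

def train_bigrams(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

def complete(counts, start, max_words=5):
    """Greedily extend `start` one most-likely word at a time."""
    words = [start]
    while len(words) < max_words:
        nxt = most_likely_next(counts, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)

counts = train_bigrams(CORPUS)
print(complete(counts, "i"))  # prints "i am here for you"
```

The comforting sentence appears only because those words followed one another most often in the counted text. Nothing in the program represents a person, a feeling, or a goal.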

The Risk Architecture

The issue lies in the ecosystem surrounding AI:

- Design Layer: Conversational products built on language models tend to prioritize engagement over wellbeing, a misalignment that matters most in mental health contexts.

- Access Layer: Structural barriers to mental health care drive users toward chatbots out of necessity, not choice.

- Regulatory Layer: Regulations such as the EU’s AI Act (adopted in 2024) struggle to categorize and manage AI’s role in mental health.

- User Layer: Many users do not understand AI’s limitations, but responsibility for that gap is shared across every layer of the ecosystem.

Addressing Anxious Language

Media coverage often uses anxiety-inducing language that draws parallels between AI and human predators, which distorts public understanding and policy development. A factual vocabulary centered on dependency and transparency promotes clarity and ethical progress.

Building the Bridge: Shared Responsibility

The Mediapart case should inspire collaboration and shared accountability:

- For creators: Introduce session limits, crisis protocols, and transparency measures, and avoid intimate language in health contexts.

- For users: Build informed autonomy, including awareness of AI’s limitations and of alternative human services.

- For regulators: Develop precise, enforceable standards, along with labeling, certification, and supervision, for AI used in mental health.
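As a rough illustration of what the creator-side measures could look like in practice, the sketch below combines a session limit with a crisis check that runs before any chatbot reply is generated. Everything in it is hypothetical: the `SessionGuard` class, the turn threshold, the phrase list, and the messages are placeholders invented for this example. A real deployment would need clinically validated detection and locally appropriate referral information.

```python
from dataclasses import dataclass

# Hypothetical placeholder phrases; real crisis detection needs clinical input.
CRISIS_PHRASES = ("want to die", "kill myself", "hurt myself")

# Hypothetical messages shown instead of a normal model reply.
MESSAGES = {
    "crisis": "I am an AI and cannot help in an emergency. "
              "Please contact local emergency services or a crisis line.",
    "limit": "This session has reached its limit. "
             "Consider taking a break or talking to someone you trust.",
}

@dataclass
class SessionGuard:
    max_turns: int = 50  # assumed threshold, not a recommended value
    turns: int = 0

    def check(self, user_message: str) -> str:
        """Classify the incoming message as 'crisis', 'limit', or 'ok'."""
        self.turns += 1
        text = user_message.lower()
        # The crisis check comes first so it is never masked by the limit.
        if any(phrase in text for phrase in CRISIS_PHRASES):
            return "crisis"
        if self.turns > self.max_turns:
            return "limit"
        return "ok"

guard = SessionGuard(max_turns=2)
for message in ["hello", "tell me more", "one more question"]:
    status = guard.check(message)
    print(status, "->", MESSAGES.get(status, "(pass message to the model)"))
```

The design choice worth noting is that both checks sit outside the language model, so these safeguards remain a decision made and owned by the people who build the product.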

Toward an Ethical Human-AI Relationship

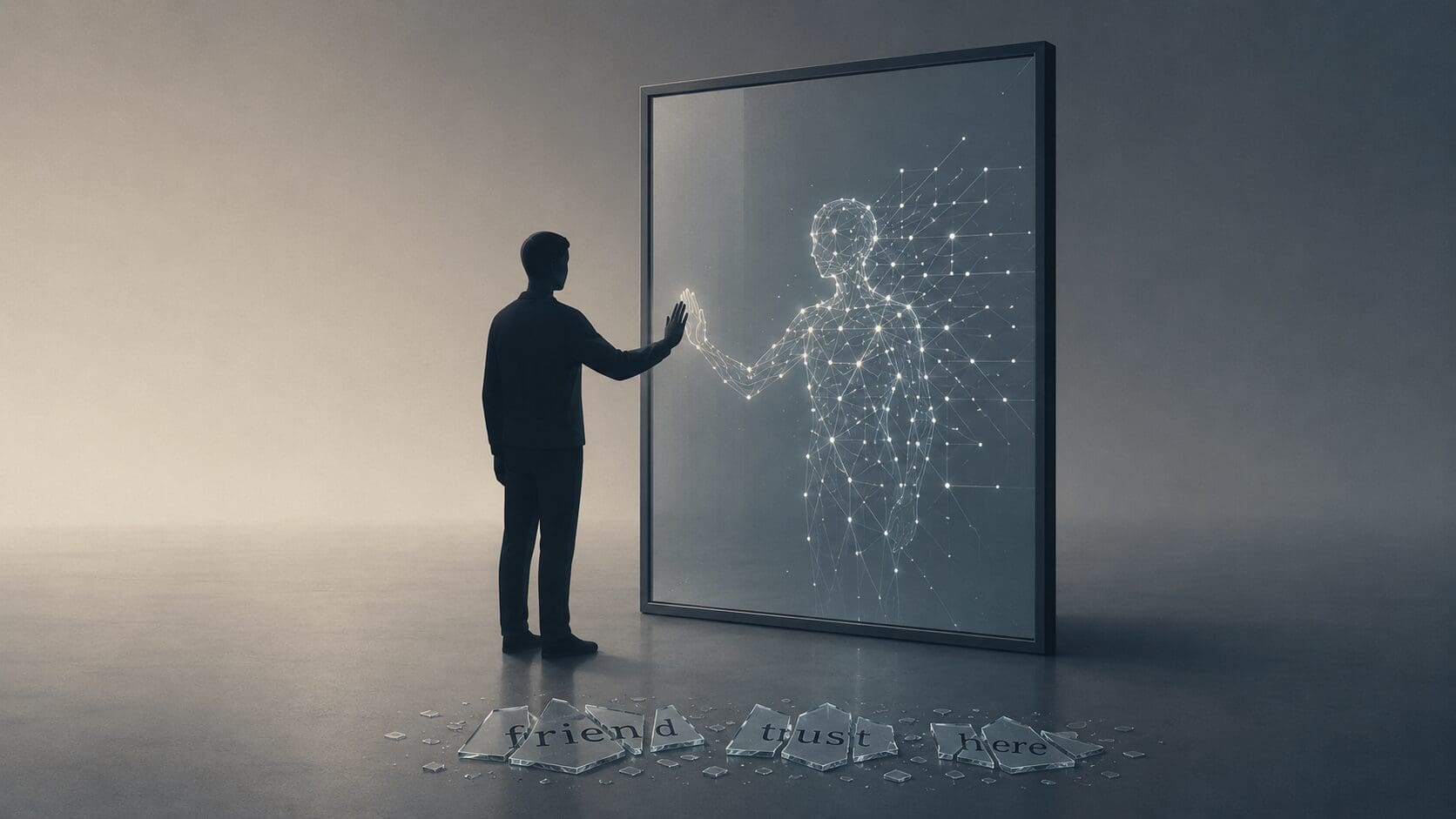

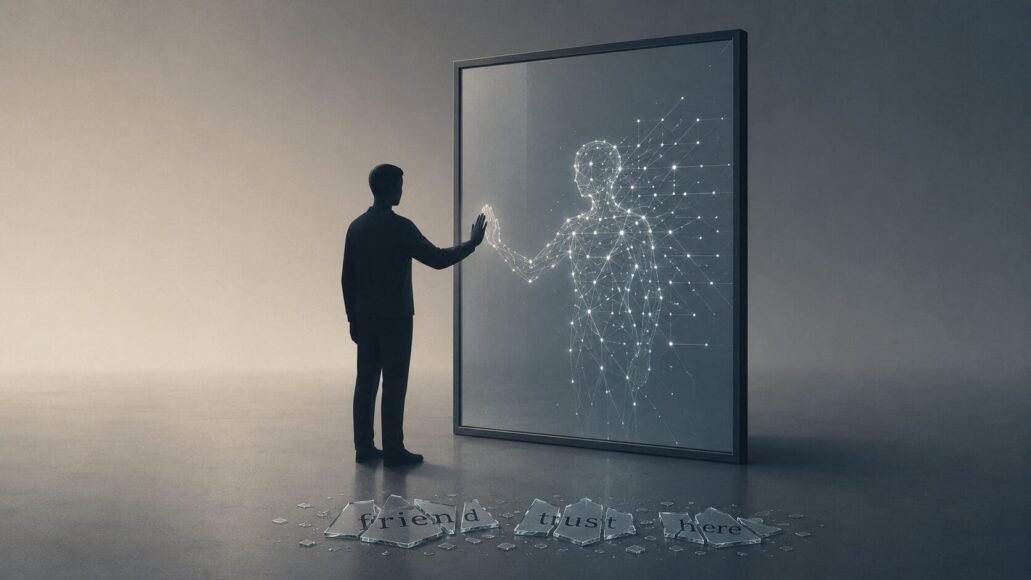

The interaction between a human and an AI is one between a conscious being and a sophisticated tool, not between equals. Recognizing this distinction ensures ethical clarity and assigns responsibility to the human actors involved.

Clarity demands abandoning fear-based vocabulary. By framing AI as a tool needing better management, we can construct frameworks that balance its benefits and risks without resorting to fantasy or fear.

The algorithm is neither friend nor foe, but a powerful tool needing responsible governance.

About the author: This article is based on advocacy work in digital ethics and human rights, informed by academic research and documentation of AI dependency cases.

